Revolutionizing Design: An In-Depth Look at Claude Design by Anthropic Labs

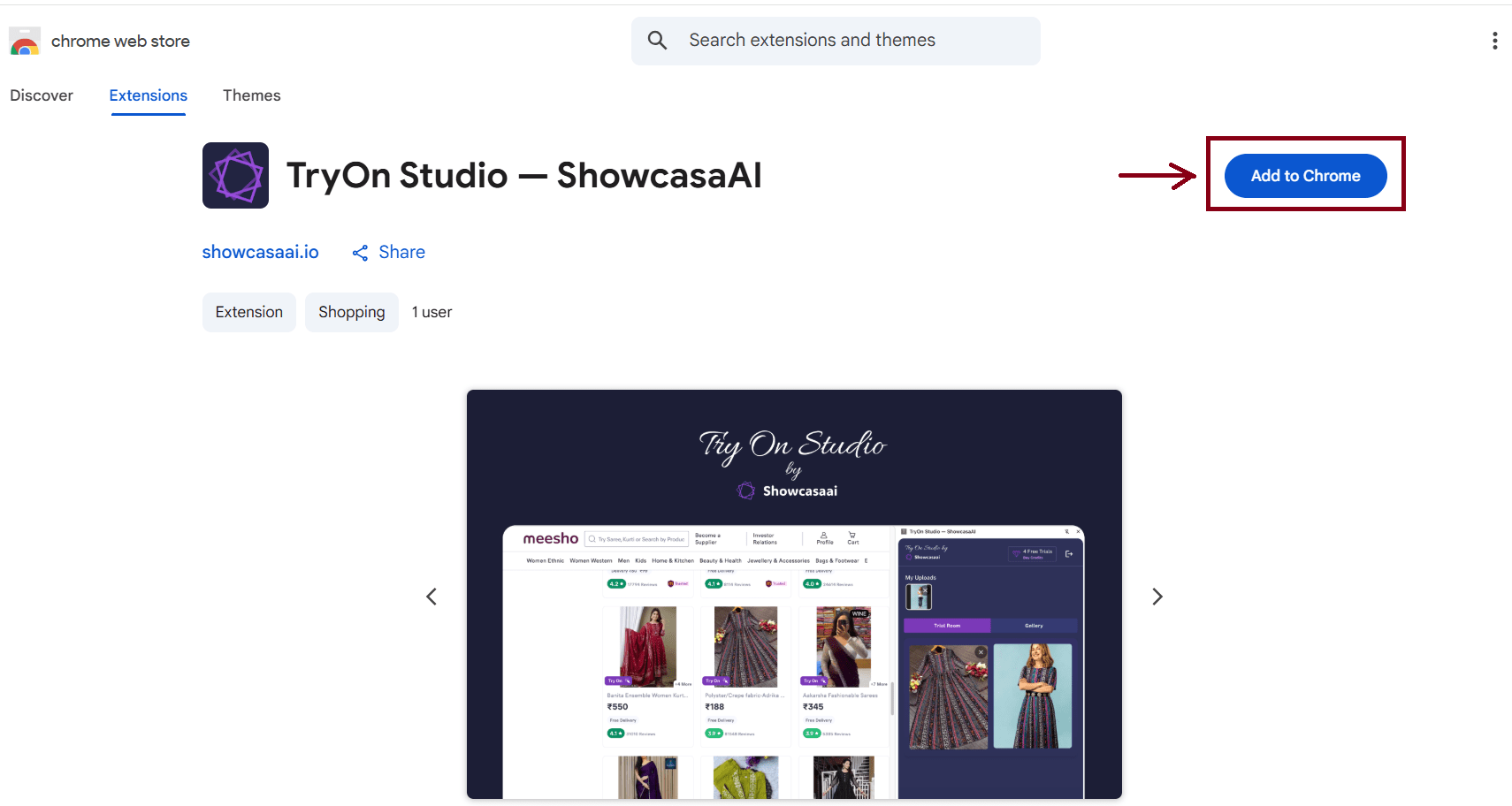

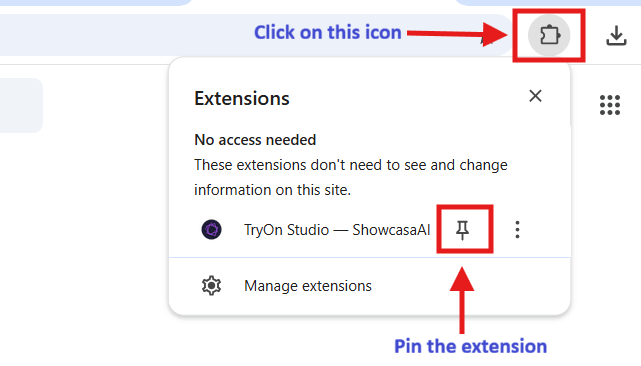

In the dynamic landscape of design and creative collaboration, Anthropic Labs has unveiled a new tool: Claude Design. Built to make design capabilities accessible to more people, Claude Design lets users from a range of backgrounds create, refine, and share polished visual work. It is powered by Claude Opus 4.7, a model with strong vision capabilities, and is available as a research preview for Claude Pro, Max, Team, and Enterprise subscribers. The rollout aims to change how visual content is developed and shared within organizations.

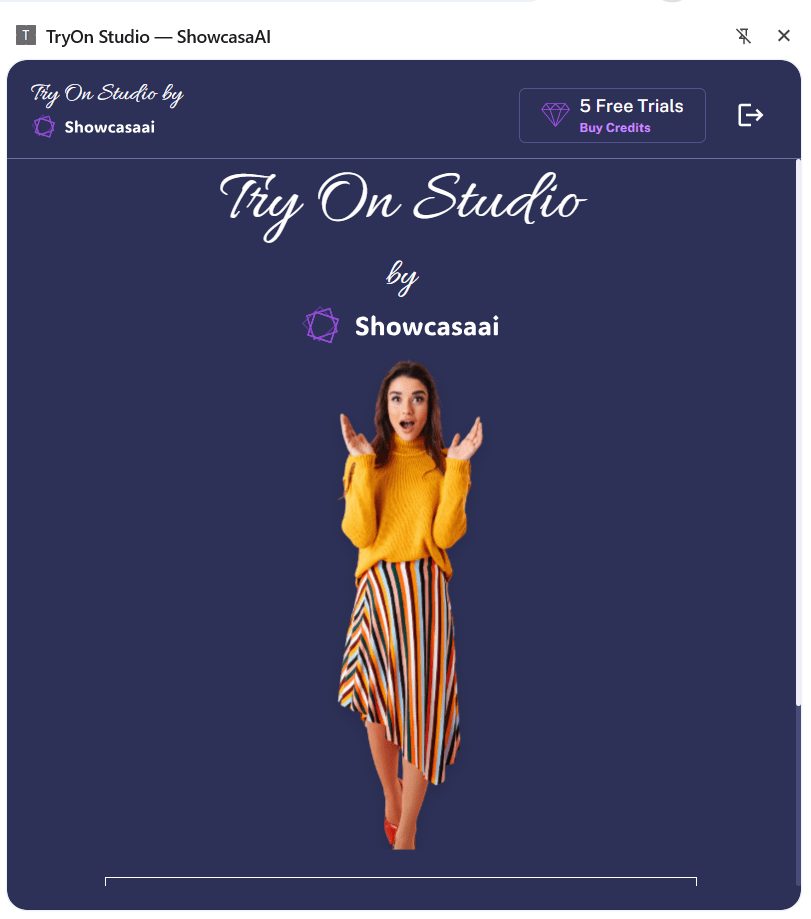

Claude Design addresses a persistent problem in the design process: time constraints limit how much exploration is possible. Designers have traditionally had to ration how many directions they try, and Claude Design aims to remove that bottleneck. It gives both experienced designers and non-designers, such as founders, product managers, and marketers, a way to turn ideas into concrete visual assets. A user starts by describing what they want, and the tool generates a first draft. That draft can then be refined through conversation, inline comments, direct edits, or custom sliders. Claude also applies your organization's design system, so projects stay visually consistent.

Among its applications, Claude Design is especially strong at creating realistic prototypes. Designers can turn static mockups into interactive prototypes for user testing and feedback gathering without writing any code. Product managers benefit in the same way: they can build detailed wireframes and mockups, then refine them further or hand them off to development teams through Claude Code.

The tool is just as useful for founders and marketers. It can take a rough outline to a polished, on-brand pitch deck or presentation within minutes, with export to PPTX and Canva. Marketing teams can produce landing pages, social media assets, and campaign visuals in collaboration with designers.

Claude Design also extends into frontier design, where anyone can build code-powered prototypes that include voice, video, shaders, 3D elements, and built-in AI. This makes it suited to experimental, technology-driven design work, not only static visuals.

Claude Design's workflow is straightforward. During onboarding, Claude builds a design system for your team by reading your codebases and existing design files, capturing your brand's color palette, typography, and components. Subsequent projects then follow that system automatically. Teams can refine it over time and maintain more than one design system if needed.

Claude Design supports several starting points: a text prompt, uploaded assets, or elements captured directly from your website. Fine-grained controls allow precise adjustments, and collaboration features let people across the organization share and edit designs.

When a design is finished, Claude Design can share it or export it to Canva, PDF, PPTX, or standalone HTML. For designs that are ready to build, Claude packages everything needed into a handoff bundle for Claude Code, carrying the work from design into implementation.

Anthropic Labs plans to expand Claude Design's integrations so teams can connect it to the tools they already use. As access broadens, organizations can adopt Claude Design as part of their creative toolkit. For Enterprise customers, Claude Design is off by default until an admin enables it, giving organizations control over when and how it is rolled out. With this launch, Anthropic Labs is taking a step toward design work where exploration is no longer limited by time.

For more information on Claude Design by Anthropic Labs, visit the official article: Claude Design by Anthropic Labs.